Opto-mechanical design and assembly

Introduction

Entangled photon pairs are a key resource for a number of applications of quantum physics, such as quantum teleportation [1] and quantum cryptography [2]. A vital step towards the global-scale implementation of such applications via ground-to-satellite and inter-satellite links is the development of robust, space-proof entangled photon sources (EPS). In simple terms, entangled photons can be considered a pair of particles that are intimately correlated. In fact, they are part of the same quantum state: any measurement on one of them affects the state of the other. Photon pairs can be entangled in a number of degrees of freedom (e.g. time, energy, momentum, polarization), of which polarization entanglement is the most established for applications in free-space optics. In this case, the two photons are anti-correlated in their polarizations, i.e. measuring a particular polarization for one photon yields the perpendicular polarization for the partner photon. The two photons remain entangled irrespective of their spatial separation (Einstein referred to this as "spooky action at a distance"). To date, the best-developed method for generating photon pairs is spontaneous parametric down-conversion (SPDC) in second-order nonlinear crystals. In the SPDC process, a photon from a high-energy pump beam (λ = 405 nm) can spontaneously split into a pair of lower-energy photons (λ = 810 nm).
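The wavelengths quoted above follow from energy conservation in the down-conversion process: the pump photon energy is shared between the two daughter photons, so 1/λ_p = 1/λ_s + 1/λ_i, and a 405 nm pump yields two 810 nm photons in the degenerate case. The short sketch below (Python, standard library only) checks this arithmetic; the function names are illustrative, and phase matching, which determines which wavelength pairs are actually emitted by a given crystal, is not modelled.

```python
# Energy conservation in SPDC: one pump photon -> signal + idler photon,
# so 1/lambda_p = 1/lambda_s + 1/lambda_i (vacuum wavelengths), with E = h*c/lambda.
# Phase matching (momentum conservation) is deliberately not modelled here.

H = 6.62607015e-34      # Planck constant, J*s (exact SI value)
C = 299_792_458.0       # speed of light in vacuum, m/s (exact SI value)
EV = 1.602176634e-19    # joules per electronvolt (exact SI value)


def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy in eV for a vacuum wavelength given in nm."""
    return H * C / (wavelength_nm * 1e-9) / EV


def idler_wavelength_nm(pump_nm: float, signal_nm: float) -> float:
    """Idler wavelength required by energy conservation: 1/l_i = 1/l_p - 1/l_s."""
    return 1.0 / (1.0 / pump_nm - 1.0 / signal_nm)


if __name__ == "__main__":
    pump_nm, signal_nm = 405.0, 810.0
    idler_nm = idler_wavelength_nm(pump_nm, signal_nm)
    print(f"idler wavelength: {idler_nm:.1f} nm")
    print(f"pump photon energy:    {photon_energy_ev(pump_nm):.3f} eV")
    print(f"signal + idler energy: "
          f"{photon_energy_ev(signal_nm) + photon_energy_ev(idler_nm):.3f} eV")
```

Running it shows the idler also at 810 nm and the pump energy matching the summed energy of the pair, which is why the blue pump and the near-infrared photon pairs can later be separated with a dichroic mirror.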

The efficient generation of photon pairs places strict requirements on the pump beam, e.g. its size, shape and polarization state. For the photon pairs to be entangled in polarization, there must be a superposition of two distinct pair-generation possibilities, each producing a pair with orthogonal polarizations. To achieve this with a single nonlinear crystal, we have chosen a set-up based on a Sagnac interferometer [3], in which the crystal is pumped in both directions around the loop. The two beams must therefore travel equal optical paths along both sides of the loop and be focused on the same point in the crystal.
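To make the "superposition of two pair-generation possibilities" concrete, the sketch below writes the idealized output state as (|H⟩_A|V⟩_B + e^{iφ}|V⟩_A|H⟩_B)/√2, assuming that one direction around the loop yields |HV⟩, the other |VH⟩, and that the two possibilities are perfectly indistinguishable (the actual assignment depends on the optics used). It then computes joint detection probabilities behind two linear polarizers. The function names are hypothetical; φ = π gives the singlet state, which is anti-correlated in every linear basis, as described in the introduction.

```python
import cmath
import math

# Two-photon polarization basis, ordered |HH>, |HV>, |VH>, |VV>
# (first letter: photon A, second letter: photon B).


def sagnac_state(phi: float) -> list[complex]:
    """Idealized Sagnac output (|HV> + e^{i*phi}|VH>)/sqrt(2), assuming the two
    pair-generation possibilities are perfectly indistinguishable."""
    amp = 1 / math.sqrt(2)
    return [0j, complex(amp), amp * cmath.exp(1j * phi), 0j]


def joint_probability(state: list[complex], a_deg: float, b_deg: float) -> float:
    """Probability that photon A passes a linear polarizer at angle a and photon B
    one at angle b (angles from horizontal, in degrees)."""
    a, b = math.radians(a_deg), math.radians(b_deg)
    # Analyser states |theta> = cos(theta)|H> + sin(theta)|V> (real coefficients)
    pa = (math.cos(a), math.sin(a))
    pb = (math.cos(b), math.sin(b))
    # Projection amplitude <a|<b|psi>, summed over the four basis components
    amplitude = sum(pa[i] * pb[j] * state[2 * i + j]
                    for i in range(2) for j in range(2))
    return abs(amplitude) ** 2


if __name__ == "__main__":
    singlet = sagnac_state(math.pi)  # phi = pi: anti-correlated in every linear basis
    for a, b in [(0, 0), (0, 90), (45, 45), (45, 135)]:
        print(f"P({a:3d} deg, {b:3d} deg) = {joint_probability(singlet, a, b):.3f}")
```

With φ = 0 instead, the H/V anti-correlation is unchanged but coincidences at 45°/45° become maximal. This is one way to see why the relative phase between the two generation paths has to be well defined and stable.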

Opto-mechanical design and environmental considerations

Fig. 2: The assembled Entangled Photon Source.

The intended field of application is the main challenge for this device: compatibility with the space environment is one of the key factors in the development of this kind of source. The device must therefore be compact, robust and efficient.

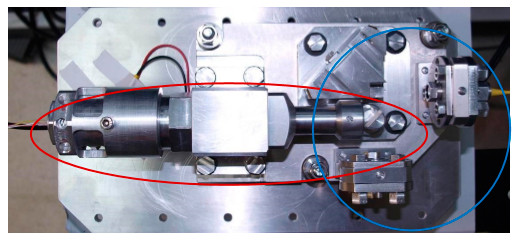

As can be seen in Fig. 2, the whole device has been custom-made from aluminum and is highly robust. Its total size is 30 × 17 × 11 cm. The EPS tube (inside the red ellipse) is placed on the left side of Fig. 2, and the Sagnac structure and the pigtailed optical fibers (inside the blue circle) on the right side.

The complexity of the device is mostly due to the robustness imposed by the application. Each optical and mechanical element must be positioned with sub-micrometer tolerances. Moreover, once positioned, no element may move by more than one micrometer, even when exposed to launch accelerations. All components must therefore be rigidly fixed. Fig. 3a shows a detail of the EPS, the Sagnac block and one pigtailed optical fiber holder.

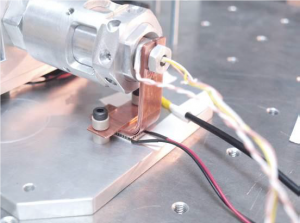

Temperature variations are also critical, because the emission wavelength of the laser diode (LD) depends strongly on temperature. Two Peltier modules are used to ensure a stable operating temperature. Fig. 3b shows the Peltier module that controls the LD temperature; it is thermally connected to the LD by several copper tapes.
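The text does not say how the Peltier modules are driven. Purely as an illustration, the sketch below assumes a proportional-integral (PI) loop acting on a one-node thermal model of the LD mount. The heat capacity, thermal resistance, LD dissipation, gains, power limit and the 20 to 35 °C housing step are all hypothetical values chosen so the example behaves sensibly; they are not properties of this device.

```python
from dataclasses import dataclass


@dataclass
class MountModel:
    """Hypothetical one-node thermal model of the LD mount (all values assumed)."""
    heat_capacity: float = 5.0   # J/K
    r_ambient: float = 10.0      # K/W, mount-to-housing thermal resistance
    ld_heat: float = 0.2         # W dissipated by the laser diode
    temp: float = 30.0           # degC, initial mount temperature

    def step(self, peltier_w: float, ambient: float, dt: float) -> None:
        # peltier_w > 0 pumps heat into the mount, peltier_w < 0 removes it.
        net_w = peltier_w + self.ld_heat - (self.temp - ambient) / self.r_ambient
        self.temp += net_w * dt / self.heat_capacity  # explicit Euler step


@dataclass
class PIController:
    kp: float             # W/K
    ki: float             # W/(K*s)
    limit_w: float        # maximum Peltier heating/cooling power, W
    integral: float = 0.0

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        candidate = self.integral + error * dt
        out = self.kp * error + self.ki * candidate
        if abs(out) <= self.limit_w:
            self.integral = candidate  # integrate only while unsaturated (anti-windup)
            return out
        return max(-self.limit_w, min(self.limit_w, out))


def simulate(setpoint: float = 25.0, seconds: float = 600.0,
             dt: float = 0.1) -> tuple[float, float]:
    """Return (final temperature, worst error after a 20 -> 35 degC housing step)."""
    mount = MountModel()
    ctrl = PIController(kp=2.0, ki=0.05, limit_w=3.0)
    worst_after_step = 0.0
    for k in range(round(seconds / dt)):
        t = k * dt
        ambient = 20.0 if t < seconds / 2 else 35.0  # hypothetical housing step
        mount.step(ctrl.update(setpoint, mount.temp, dt), ambient, dt)
        if t >= seconds / 2:
            worst_after_step = max(worst_after_step, abs(mount.temp - setpoint))
    return mount.temp, worst_after_step


if __name__ == "__main__":
    final, worst = simulate()
    print(f"final mount temperature: {final:.3f} degC, "
          f"worst deviation after step: {worst:.2f} K")
```

The integral term removes the steady-state offset that a purely proportional loop would leave after the housing temperature changes. The conditional integration is one simple anti-windup choice: it stops the integrator from building up while the Peltier output is saturated during start-up.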

Assembly of the Entangled Photon Source

The EPS has been assembled module by module. First, the EPS tube was assembled lens by lens until the desired focal point was obtained. The EPS tube must be accurately aligned, and the distances between the lenses and the laser diode must match exactly those previously calculated. The movable parts inside the EPS tube are the laser diode (x and y axes), lens L3 (x axis) and lens L4 (y axis); all other optical elements are fixed by the mechanical mounting.

Fig. 3a: Sagnac block and pigtailed optical fiber holder.
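The text states that the lens spacings must match previously calculated values but does not give them or the method used. One standard way to do such a calculation is Gaussian-beam propagation with ray-transfer (ABCD) matrices acting on the complex beam parameter q, sketched below; this is not necessarily the authors' method. The 1 mm input waist and the single 100 mm lens are hypothetical numbers, and an axially symmetric beam is assumed (an elliptical laser-diode beam would need a separate calculation for each transverse axis).

```python
import math

WAVELENGTH_M = 405e-9  # pump wavelength quoted in the text


def q_at_waist(w0_m: float, wavelength_m: float = WAVELENGTH_M) -> complex:
    """Complex beam parameter q = i*z_R at a Gaussian waist of radius w0 (in air)."""
    return 1j * math.pi * w0_m**2 / wavelength_m


def through_space(q: complex, d_m: float) -> complex:
    return q + d_m  # ABCD matrix [[1, d], [0, 1]]


def through_thin_lens(q: complex, f_m: float) -> complex:
    return q / (1 - q / f_m)  # ABCD matrix [[1, 0], [-1/f, 1]]


def next_waist(q: complex, wavelength_m: float = WAVELENGTH_M) -> tuple[float, float]:
    """Distance to the next waist (positive = downstream) and that waist's radius."""
    z_r = q.imag  # Rayleigh range is unchanged by free-space propagation
    return -q.real, math.sqrt(wavelength_m * z_r / math.pi)


if __name__ == "__main__":
    # Hypothetical example: 1 mm waist located at a single f = 100 mm lens.
    q = through_thin_lens(q_at_waist(1e-3), 0.100)
    distance, w_focus = next_waist(q)
    print(f"focus {distance * 1e3:.3f} mm after the lens, "
          f"waist radius {w_focus * 1e6:.2f} um")
```

Chaining further `through_space` and `through_thin_lens` calls models a multi-lens tube, and re-running the chain with one spacing changed shows how the focal position and spot size depend on each distance.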

Once assembled, the EPS tube has to be joined to the other parts of the device. The Sagnac structure can be divided into three parts: the dichroic mirror, the Sagnac block and the pigtailed optical fibers. The optical fibers can be mounted at the end of the process, but the EPS tube, the dichroic mirror and the Sagnac block must be assembled together. Because of the required robustness, the degrees of freedom available to align all these elements are limited.

Fig. 3b: Peltier module for cooling/heating the laser diode.

Assuming all parts are aligned in the xy plane, only the slight rotations allowed by the screws remain possible. In addition, all elements are glued, which adds robustness but further reduces the degrees of freedom available for alignment.

The alignment of all parts was assisted by illuminating the structure from one of its outputs: light launched into one pigtailed fiber should leave the Sagnac structure through the other one. Once this is achieved, the whole Sagnac structure can be considered correctly aligned. The dichroic mirror and the EPS tube are then aligned with respect to the Sagnac structure.
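Back-illumination works as an alignment check because, assuming single-mode fibers, the power transferred from one pigtailed fiber to the other drops steeply with any residual lateral offset or tilt of the refocused beam at the receiving fiber facet. The sketch below uses the standard overlap result for two identical Gaussian modes; the 2.5 µm mode-field radius is a hypothetical value, not a specification of the fibers used here.

```python
import math


def coupling_efficiency(offset_m: float, tilt_rad: float,
                        mode_radius_m: float, wavelength_m: float) -> float:
    """Power coupling between two identical Gaussian modes (radius w) that meet
    at the same plane with a lateral offset d and an angular tilt theta:
        eta = exp(-(d / w)**2) * exp(-(pi * w * theta / wavelength)**2)
    Longitudinal gaps, mode-size mismatch and Fresnel losses are ignored."""
    lateral = math.exp(-(offset_m / mode_radius_m) ** 2)
    angular = math.exp(-(math.pi * mode_radius_m * tilt_rad / wavelength_m) ** 2)
    return lateral * angular


if __name__ == "__main__":
    w = 2.5e-6    # hypothetical mode-field radius of a single-mode fiber at 810 nm
    lam = 810e-9  # down-converted wavelength quoted in the text
    for d_um in (0.0, 0.5, 1.0, 2.0):
        eta = coupling_efficiency(d_um * 1e-6, 0.0, w, lam)
        print(f"offset {d_um:.1f} um -> coupling {eta:.2f}")
```

With these assumed numbers a 1 µm lateral offset already costs roughly 15% of the coupled power, which illustrates why the elements must not move by more than about one micrometer after alignment.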

Conclusions

We have designed, manufactured and assembled an Entangled Photon Source for space applications. A focal spot with the same dimensions as the theoretically calculated one has been achieved. The mechanical stability of the spot has been demonstrated under laboratory conditions. In addition, the global stability of the device, including the Sagnac structure, has also been demonstrated in the laboratory by monitoring the output of the optical fibers over one month. This work establishes a reliable basis for the future design and assembly of compact and robust Entangled Photon Sources for space and terrestrial applications.
